A mental model is a pattern in reality that shows up again and again. Once you recognize one, it starts to pop up everywhere. Understand how it works, and you can recognize something deep about the universe.

Here are the ten mental models that have impacted my thinking the most:

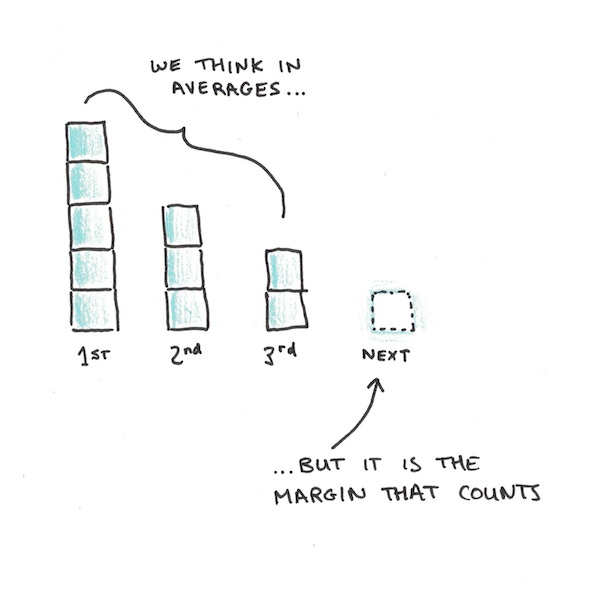

1. The Margin

We think in averages, but reality works on margins.

Economists like to talk about marginal changes: how much it costs, or how much you gain, when you do one more of something. This matters because the cost of doing something is rarely constant.

Marginal benefits tend to experience diminishing returns. Eating the twentieth piece of cake isn’t as good as the first. Marginal costs, in contrast, can often decline. Writing the twentieth essay takes less effort than the first, because it gets easier with practice.

The mistake is to think in averages. We ask how much each one cost in the past, rather than asking how much the next one will likely cost.
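A small sketch of the gap between the two questions, using made-up numbers for the essay example (the hours below are purely illustrative, not measurements):

```python
# Hypothetical hours spent on each successive essay (invented for illustration).
hours_per_essay = [10, 8, 6, 5, 4]


def average_cost(costs):
    """Average cost per unit across everything done so far."""
    return sum(costs) / len(costs)


def marginal_cost(costs):
    """Cost of the most recent unit, a rough guide to what the next one costs."""
    return costs[-1]


avg = average_cost(hours_per_essay)
last = marginal_cost(hours_per_essay)
print(f"average: {avg:.1f} hours, most recent: {last} hours")
```

With these numbers the average overstates what the next essay is likely to cost, because the early, expensive essays are still dragging it up.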

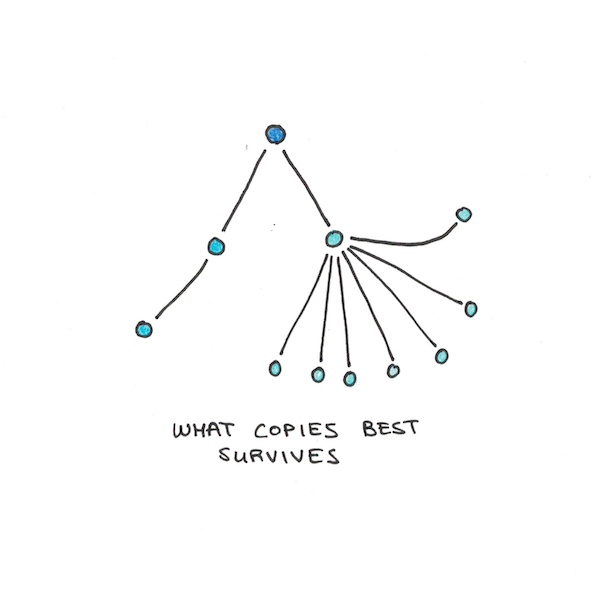

2. Natural Selection

Darwin didn’t just explain the origin of species. He showed how any useful complexity can be generated mechanically.

All you need is faithful copying which preserves infrequent mistakes, and for the content of what is copied to have some influence on its own survival. This applies not only to life but to ideas, technology and traditions.

Understanding natural selection can tell you a lot about which things will spread. It can explain why diseases tend to get less deadly as they infect more people. It shows why the news that spreads on social media tends to be the news that provokes the most outrage. We can even adapt it to explain how cultures develop over time.

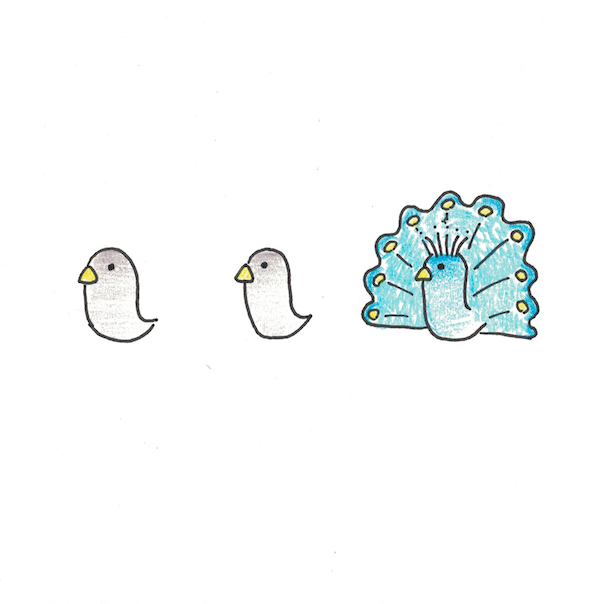

3. Signaling

Why is hotel bedding white? Why do we pay more for medicine than prevention? Why would most people rather have credentials than an education? The answer is signaling.

Signaling is taking visible actions to change what other people think. The fact that you can lie creates strange incentives for would-be signalers to choose things that make lying harder. Hotel bedsheets are typically white because patterned sheets hide stains. If you’re worried about cleanliness, a pristine white sheet is harder to fake.

We tend to be suspicious of signaling, as it feels less authentic. But it is likely embedded into our psychology at a very basic level. Much of our behavior may be optimized for sending signals, rather than merely reaching direct ends.

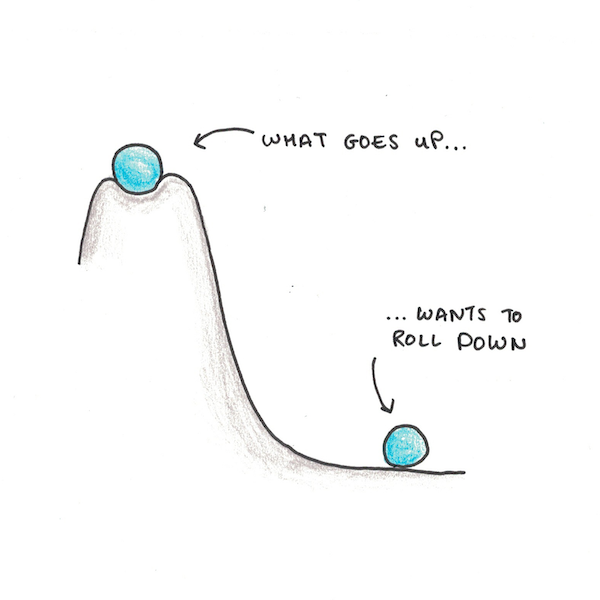

4. Potential

Things fall down. Potential is a physical concept that extends the notion of height. A ball high in the sky has gravitational potential. Let it go and it will fall down.

Voltage is the analogous quantity for electricity. A high voltage wire is like the ball high up: it wants to fall down to a lower potential. Put a machine in between and you can get useful work done.

The idea of a potential is useful beyond physics. Machine learning uses gradient descent, the mathematical equivalent of trying to roll down a high-dimensional hill. I’ve argued that many habits might be metastable, invoking the metaphor of falling down to explain why few habits last forever.
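To make the rolling-downhill picture concrete, here is a minimal one-dimensional sketch of gradient descent. The hill `f(x) = (x - 3)^2`, the step size and the starting point are all assumptions chosen for illustration; real machine learning problems have many more dimensions.

```python
def gradient_descent(grad, x0, step=0.1, iterations=100):
    """Repeatedly take a small step in the downhill direction."""
    x = x0
    for _ in range(iterations):
        x -= step * grad(x)  # move against the slope
    return x


# Illustrative hill: f(x) = (x - 3)^2, whose slope is 2 * (x - 3).
# The lowest point of this hill is at x = 3.
minimum = gradient_descent(lambda x: 2 * (x - 3), x0=10.0)
print(minimum)
```

Starting from `x0=10.0`, each step shrinks the distance to the bottom of the hill, so the result ends up very close to 3.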

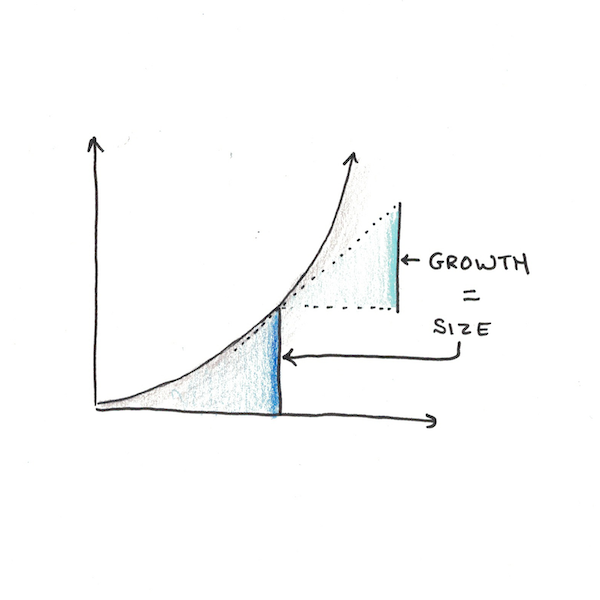

5. Compounding

Exponential growth is both rare and incredibly powerful.

What makes compounding work is when the rate of growth depends on the amount you already have. Money compounds because each dollar you already have contributes interest. Bunnies left unchecked multiply exponentially because each pair creates more bunnies.

We aren’t built to think in terms of exponential growth. When it occurs, we’re often taken by surprise.
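A quick sketch of why compounding surprises us, comparing growth that feeds on itself with growth that doesn't. The 7% rate, the 30 periods and the starting amount of 100 are arbitrary assumptions for illustration.

```python
def compound(principal, rate, periods):
    """Growth where each period's gain depends on the current balance."""
    balance = principal
    for _ in range(periods):
        balance += balance * rate
    return balance


def linear(principal, rate, periods):
    """Growth where each period's gain depends only on the starting amount."""
    return principal + principal * rate * periods


compounded = compound(100, 0.07, 30)  # about 761
flat = linear(100, 0.07, 30)          # about 310
print(f"compounding: {compounded:.0f}, linear: {flat:.0f}")
```

The two are nearly identical for the first few periods, which is part of why the later divergence catches people off guard.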

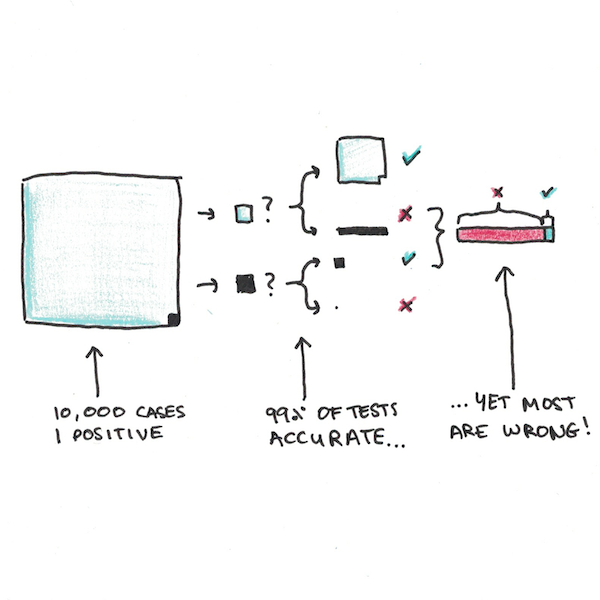

6. Bayes’ Rule

Imagine you’re a doctor testing for a rare disease. Only one in ten thousand people has it. You check for the disease with a test that only makes a mistake one out of every hundred tests. A patient comes in, you run the test and get the answer: positive.

Question: What’s the likelihood the patient has the disease?

If you said 99%, you’re not alone. Most doctors get similar questions wrong too.

They’re wrong because they failed to use this mental model. Whenever you get new information (say, a test result) you need to combine it with the background information you already have. Don’t start from scratch.

If we tested 10,000 people with the test we mentioned, on average the 9,999 people who don’t have the disease would get about 9,899 negatives and 100 false positives, alongside the one true positive. Thus a positive result from a 99% accurate test ends up being wrong in roughly 99% of cases!
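The same arithmetic can be written as a short calculation. This sketch assumes the test's 1% error rate applies in both directions (it misses 1% of sick patients and flags 1% of healthy ones); the function and parameter names are just illustrative.

```python
def posterior(prior, sensitivity, false_positive_rate):
    """Probability of having the disease given a positive test (Bayes' rule)."""
    true_positives = prior * sensitivity
    false_positives = (1 - prior) * false_positive_rate
    return true_positives / (true_positives + false_positives)


p = posterior(prior=1 / 10_000, sensitivity=0.99, false_positive_rate=0.01)
print(f"chance the patient is actually sick: {p:.2%}")
```

The result is just under 1%, because the rare disease's tiny base rate outweighs the test's accuracy.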

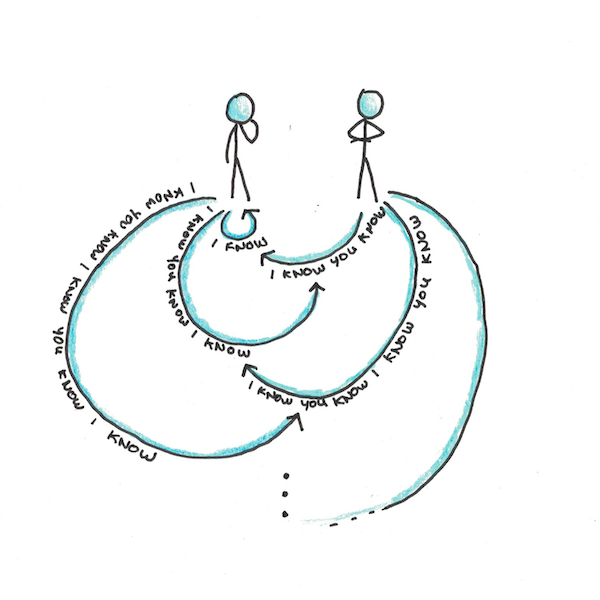

7. Common Knowledge

Common knowledge is what everybody knows. But not just that: it’s what everybody knows that everybody knows. Even more, everybody knows that everybody knows that everybody knows. Repeat ad infinitum and you have common knowledge.

The best illustration of common knowledge is in The Emperor’s New Clothes. The emperor is naked and everybody knows he’s naked. But because it’s not common knowledge that he’s naked, everybody plays along and acts like he’s dressed fabulously. It takes an innocent child to speak aloud what was already in everybody’s minds.

Common knowledge matters because it can create stable points around arbitrary decisions. Money, for instance, is valuable because everybody agrees it has value. If the value of money weren’t common knowledge, it wouldn’t work as money.

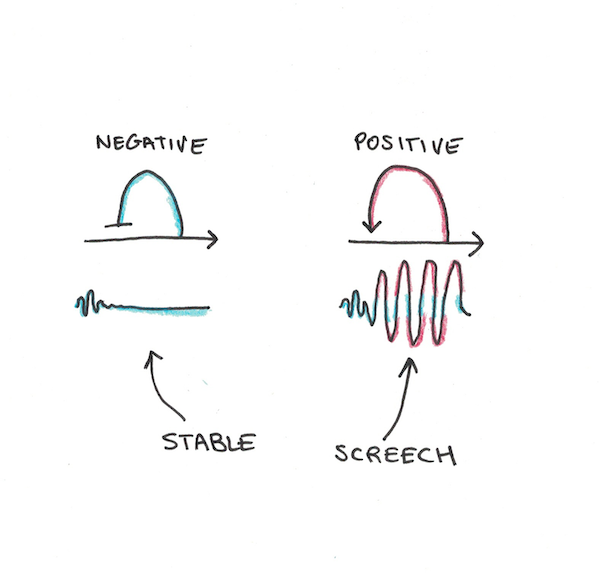

8. Feedback

Feedback is just one pattern within the broader field of systems thinking.

Systems thinking applies to neuroscience, molecular biology, politics, economics and far more. The idea is that abstract flows of information can lead to complex behavior that analyzing the parts alone couldn’t predict.

Feedback can come in two flavors: positive and negative. Negative feedback is when an output dampens its own input. The result is stability. Positive feedback is when the output amplifies the input. The result is an explosion that depends on the starting point.

Much of our behavior is governed by negative feedback. You’re hungry, you eat and feel less hungry. You work really hard, feel satisfied and take a break. But other loops are positive: success can bring confidence, which brings more success. Fear can lead to avoidance, which reinforces anxiety. Knowing which kind of loop you’re in can make a huge difference.
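A toy simulation of the two flavors. It assumes a very simple update rule, where each step adds `gain` times the current value back into the system; the rule, the gains and the starting values are illustrative choices, not a model of any real system.

```python
def simulate(gain, start, steps=10):
    """Apply x -> x + gain * x repeatedly and record every value."""
    x = start
    history = [x]
    for _ in range(steps):
        x = x + gain * x  # the output is fed back into the input
        history.append(x)
    return history


damped = simulate(gain=-0.5, start=8.0)     # negative feedback: settles down
amplified = simulate(gain=0.5, start=8.0)   # positive feedback: blows up
mirrored = simulate(gain=0.5, start=-8.0)   # same loop, opposite starting point
print(damped[-1], amplified[-1], mirrored[-1])
```

With a negative gain the value shrinks toward zero wherever it starts. With a positive gain it runs away, and the direction it runs depends entirely on the sign of the starting point.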

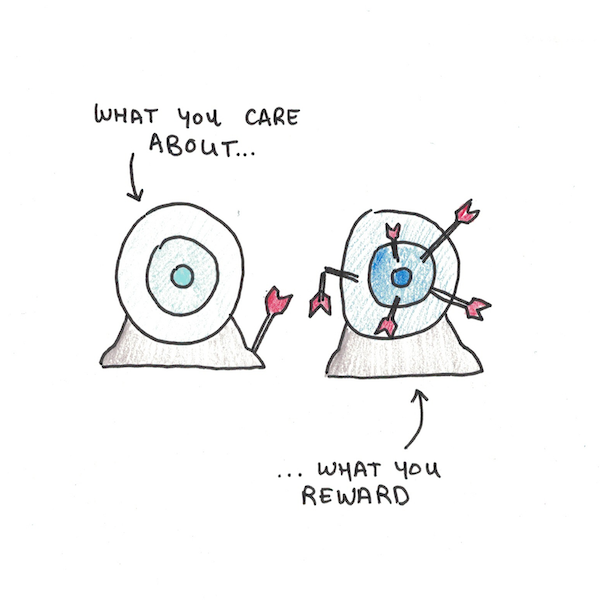

9. Incentives

Suppose we added a new event to the Olympics. It was part 100-meter dash, part beauty pageant. Now imagine that, regardless of how fast they run, the winners were always the best looking.

If such an event existed, would you be surprised if you observed that the contestants spent most of their time on cosmetics and hairstyling, and little time on actually running?

This example is silly, but analogous situations exist in the real world. Professors are overwhelmingly judged on their research output, not their teaching ability. Is it any wonder, then, that even at ruinously expensive universities, lectures are often uninspired?

Using this mental model involves backwards reasoning. Given what we see people do, what can we guess about the incentives that guide them? Better to notice this in advance, before you start training for a race that won’t matter.

10. Nash Equilibria

A Nash equilibrium, named after John Nash (they even made a movie about him), is a stable strategy for a multiplayer game.

A strategy is stable if, knowing what everyone else is doing, you don’t want to do something different. It is unstable if, knowing what everyone else is doing, you want to change how you play.

The Prisoner’s Dilemma is an example of an (unfortunate) Nash equilibrium. Snitch while your partner keeps quiet and you’ll get out of jail free, but your partner in crime will rot in prison. If you both keep quiet, you’ll both get a light sentence. The problem is that the good outcome (both keeping quiet) is unstable. Regardless of what your partner chooses, your best bet is to snitch, so the equilibrium is for both of you to snitch.
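The stability check can be sketched in a few lines: an outcome is a Nash equilibrium if neither player can do better by changing only their own choice. The prison sentences below are hypothetical numbers picked to match the story (fewer years is better); only their ordering matters.

```python
from itertools import product

ACTIONS = ("quiet", "snitch")

# Hypothetical years in prison for (player 0, player 1) under each pair of choices.
YEARS = {
    ("quiet", "quiet"): (1, 1),
    ("quiet", "snitch"): (10, 0),
    ("snitch", "quiet"): (0, 10),
    ("snitch", "snitch"): (5, 5),
}


def is_nash(profile):
    """True if no player can shorten their own sentence by switching alone."""
    for player in (0, 1):
        current = YEARS[profile][player]
        for alternative in ACTIONS:
            deviated = list(profile)
            deviated[player] = alternative
            if YEARS[tuple(deviated)][player] < current:
                return False
    return True


equilibria = [p for p in product(ACTIONS, repeat=2) if is_nash(p)]
print(equilibria)  # [('snitch', 'snitch')]
```

Both players keeping quiet gives a better result for each of them than both snitching, yet it fails the check because either one could walk free by switching.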

Nash equilibria explain why social change is hard, even if everybody agrees. If polluting is easier than not, and the cost of pollution is shared, the Nash equilibrium is for everyone to pollute. This is true, even if everyone would rather live in a world without pollution.

The Value of Mental Models is Deep, Not Shallow

Being shown a new mental model isn’t enough. To get real value out of mental models, you need to understand them deeply. You need to see them in many contexts and get a feel for how they work.

But, if you hear an abstraction come up again and again, and you don’t understand it well, that’s a good sign there’s a hidden mental model waiting to be unlocked.

I'm a Wall Street Journal bestselling author, podcast host, computer programmer and an avid reader. Since 2006, I've published weekly essays on this website to help people like you learn and think better. My work has been featured in The New York Times, BBC, TEDx, Pocket, Business Insider and more. I don't promise I have all the answers, just a place to start.
