I often don’t feel smart enough to do what I do.

I don’t say this out of false modesty or as an attempt to be more relatable. I’ve spent the last decade taking on rather immodest learning challenges, and I’ve always been confident in my ability to learn new things.

Instead, the insecurity comes from recognizing that, no matter how hard I try, there are tons of people who know way more than me. Perhaps they, not I, should be the ones to write these essays?

I was reminded of this recently while reading Scott Alexander’s newsletter. He writes:

I often find myself trying to justify my existence; how can I write about science when I’m not a professional scientist, or philosophy when I’m not a professional philosopher, or politics when I’m not a professional policy wonk? When I’m in a good mood, I like to think it’s because I have something helpful to say about these topics. But when I’m in a bad mood, I think the best apology I can give for myself is that the discovery drive is part of what it is to be human, and I’m handling it more gracefully than some.

Alexander is extremely smart. He’s written some of my favorite essays. He’s able to quickly summarize deep research and reveal hidden patterns in our debates, and he manages to be funny at the same time. As a writer, I’m envious.

Why should Alexander feel the need to apologize for his efforts to assemble new ideas? Why should you or I not feel smart enough to do the work we want to do, and what should we do about it?

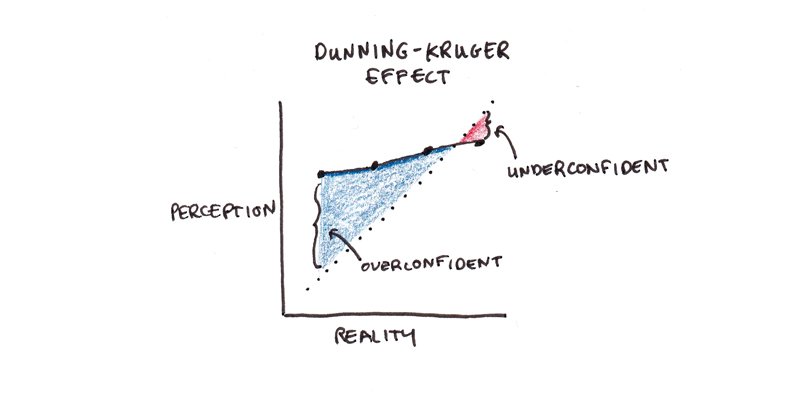

Dunning-Kruger Effect?

The popular explanation of this is the Dunning-Kruger effect. Those who are incompetent also cannot accurately self-assess their competence. As David Dunning explains:

“If you’re incompetent, you can’t know you’re incompetent … The skills you need to produce a right answer are exactly the skills you need to recognize what a right answer is.”

Bertrand Russell expressed a similar sentiment nearly a century ago: “The fundamental cause of the trouble is that in the modern world the stupid are cocksure while the intelligent are full of doubt.”

So that’s the problem? Smart people are insecure about their knowledge, and the ignorant are oblivious to their incompetence?

Except that’s not what the research actually shows. The original study found that incompetent students overestimated their class rank, while top students underestimated theirs. However, incompetent students didn’t think they were better than good students. As one summary of the research puts it:

[T]he bias is definitively not that incompetent people think they’re better than competent people. Rather, it’s that incompetent people think they’re much better than they actually are. But they typically still don’t think they’re quite as good as people who, you know, actually are good.

Did all those people who casually cited the Dunning-Kruger effect to mean “stupid people are cocksure” just fall victim to Dunning-Kruger? Or is something else going on here?

Digging Into Knowledge

My own experience with learning things tends to involve the following cycle:

- Read claim X.

- Read a bit more about claim X. It turns out the authors who proposed X didn’t actually say X, but X’. Also, some people think Y and Z with good reason. Nobody thinks Q.

- Actually, some people think Q. And X’ is contradicted by work done in a different field, except there it’s called P. Maybe X was right all along?

The expectation is that as you learn more and more, you’ll eventually hit a bedrock of irrefutable scientific fact. Usually, though, the bottom of one’s investigation is muck. Some parts of the original idea get sharpened; others blur as more complications and nuance are introduced.

This experience doesn’t just apply to researching facts. When I started learning languages, I would have been more than happy just to carry on a decent conversation. But once I achieved that, I became self-conscious of all the things I couldn’t do well. I’d struggle to follow movies or read literature. Group conversations could be surprisingly challenging. Occasionally I’d bump into a seemingly basic situation that I couldn’t grasp. I’d think: “Wait… Am I actually terrible at this?”

Given my modest talents, it’s impossible to assert that this pattern continues forever. Perhaps there truly is a vaunted place of excellence on the other side, where doubts cease to exist and one is supremely self-confident. If such a place exists, I certainly haven’t found it.

Instead, I suspect that this squirming feeling of self-doubt is a persistent feature of learning. Speculating further, I’d suggest it arises from at least two principal causes: one inherent in the cognitive structure of knowledge and skills, and the other in the social comparison with expert performers.

Knowledge Structures and Social Comparisons

The first cause of this trouble seems to be in how knowledge works. Ability appears to result from accumulating increasingly nuanced patterns of discrimination, motor skill, and reasoning.

Consider learning to speak English. You first learn the word “good,” which applies to a whole range of situations. Later you learn shades of intensity: “okay,” “fine,” and “excellent.” As you gain more knowledge, you learn “stupendous,” “sublime,” and “stellar.”

Each word carries a slightly different meaning. A space of discrimination previously described by “good” now gets subdivided into many overlapping sections. Blanks exist at each stage of the learning process: areas where you don’t have a good word to describe the situation. As you get more precise, the blanks appear to grow. Gaps that seemed negligible begin to look like chasms.

Measured against your past self, there is consistent progress. You always know more than you knew before. But it often doesn’t feel that way. Instead, learning more seems to make you feel more ignorant about everything else you don’t know.

The other effect of increasing knowledge is changing who you compare yourself to. When I started writing, I mostly read other advice writers and compared my work to theirs. Now that I spend most of my days reading original research, I’m comparing the quality of my thinking to a more rarefied intellectual stratum. That comparison is not always favorable.

Dealing with Intellectual Insecurity

There seem to be two strategies for dealing with this kind of intellectual insecurity.

The first is to “leave it to the experts.” The relatively smart often apply this one to the relatively uninformed as a kind of browbeating. In other cases, it’s intended to remove noise from a discussion so those with the most experience can speak the loudest.

While I understand wanting to let the best-informed be heard most clearly, I have serious doubts about the consequences if this strategy were applied consistently.

Fields of expertise often develop insular intellectual cultures where questionable assumptions can get entrenched. While a failure to heed expertise is blamed for many of our current woes, an overconfidence in expertise may have produced some of the woes of the past. It’s not obvious, to me at least, that our overall credence in authorities is set too high or too low.

I am persuaded by Hugo Mercier and Dan Sperber’s account of reasoning. They argue that the best answers arrive not from individual rationality, but from open, deliberative processes. Reason, in this sense, functions more like a conversation than a solitary mental act. While individual voices may be wrong, the collective takeaway from the discussion is fairly good.

Still, there’s an obvious opposite danger in intellectual egalitarianism: treating everybody’s opinions as equal, even if some people have spent years in careful study and others have not.

The second strategy, which I prefer, is to try to be honest yet humble. Do your best, given what you know. Be willing to change your mind. If many people who know more than you disagree, it’s a sign to stop and listen.

Perhaps it is a tad self-serving in light of my career as a writer of non-expert opinions, but my gut tells me that the right way to use one’s insecurity is as a drive to improve, not to halt inquiry. Be honest and do the best work you can, but don’t ever stop learning.

I'm a Wall Street Journal bestselling author, podcast host, computer programmer and an avid reader. Since 2006, I've published weekly essays on this website to help people like you learn and think better. My work has been featured in The New York Times, BBC, TEDx, Pocket, Business Insider and more. I don't promise I have all the answers, just a place to start.